In my last blog, “Human Error – We all make mistakes” (see here), I shared a personal experience involving DIY and a domestic electrical system. Fortunately, or perhaps I should say luckily, it was one where there was no bad outcome. We got away with it scot-free. The point I made in that blog was that human error was very much in evidence. And the particular human error illustrated was a mistake, in the sense used by the human failures model in HSG 48: Reducing error and influencing behaviour.

Swiss Cheese

In this blog I would like to look under the bonnet of the event and go beyond the mistake that was committed. That mistake was, of course, the act of flicking the isolator switch from up (OFF) to down (ON).

If you look at the event through the lens of most accident theories or loss-causation models then this mistake is the obvious unsafe act. If there had been any serious injury or damage done during the work then this would be identifiable as the immediate cause.

Specifically, if you look at the event through the lens of James Reason’s Active and Latent Failures (or Swiss Cheese) model, then this mistake, this unsafe act, is the active failure. It is the human error made at the time and place of the incident by the people at the sharp end, who are also the people most likely to be affected by it.

And as many people, including Reason, have pointed out, the people who are involved in active failures are often the ones who carry the can. That is, they get the blame for it. Because the blame game is often fun to play. To quote Reason: “Blaming individuals is emotionally more satisfying than targeting institutions”.

Latent Conditions

But, and this is the issue that lies at the heart of most causation models, while blaming individuals for active failures may be emotionally satisfying it does not prevent recurrence. Which is arguably one of the key objectives of any incident investigation.

Human failure is inevitable. What I mean by that is that people will not behave in ideal ways all of the time, because they are human. This is not simply part of the “human condition”. It is hard wired into the way the universe works. Things degrade. Entropy. There isn’t a machine in existence that will not, at some stage, fail to behave ideally.

If human failure is inevitable then we have to accept this fact and design control systems that accept, accommodate and even anticipate human error. High reliability organisations (i.e. those that function error-free for long periods of time even when engaged in safety-critical operations, such as air traffic control) are mindful of this.

Reason’s Swiss Cheese model looks beyond the unsafe acts and human errors that lie at the sharp end of things to consider latent conditions. These are the imperfections that sit within the various control measures or defensive layers that form parts of the management system that protect people and assets from the hazards that would otherwise present significant risk.

Defensive Layers

In Reason’s model these defensive layers are many and various. They include control measures such as training and supervision (which are often determined by management within an individual organisation). They also include engineering control measures which have been designed into machinery, plant or buildings during design, construction or modification. These matters may, or may not, have been specified by, and under the control of, an organisation’s management. Importantly, these defensive layers also include matters such as statutory requirements, standards and regulatory oversight. These matters will not sit within the management of an organisation. Instead they are usually under the control of government and national and international bodies.

“Onions have layers, Swiss cheese has layers”

Reason imagines these defensive layers as slices of Swiss cheese. And like all good Swiss cheese these defensive layers will have holes in them. These holes are the imperfections or weaknesses in the defensive layer. But unlike the holes in real Swiss cheese, these holes are not fixed and immovable. They are dynamic. Each defensive layer will have weaknesses that move about and open and close as circumstances dictate. If a hole opens in one defensive layer then the hazard is able to penetrate through. But hopefully it will only get as far as the next defensive layer.

However, if the holes all align there comes a specific moment in time when circumstances permit the hazard to penetrate all of the defensive barriers. The latent conditions and the active failures align. And bang! The incident occurs.

There is an excellent short video that illustrates this idea in action (see here).
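For readers who like to see the arithmetic, the alignment of holes can be sketched in a few lines of Python. This is a deliberate simplification and not part of Reason's model: it assumes that each defensive layer has a hole open with some fixed probability at the moment the hazard arrives, and that the layers behave independently of each other. Real defences rarely do either, since holes move and can share common causes. The function name and the numbers are hypothetical, chosen purely for illustration.

```python
from math import prod


def breach_probability(hole_probabilities):
    """Chance that a hazard passes every layer, ASSUMING independent layers.

    Each entry is the (hypothetical) probability that the hole in one
    defensive layer is open at the moment the hazard arrives.
    """
    for p in hole_probabilities:
        if not 0.0 <= p <= 1.0:
            raise ValueError("each probability must be between 0 and 1")
    # Under the independence assumption, the chance that all holes line up
    # is simply the product of the individual probabilities.
    return prod(hole_probabilities)


# Illustrative numbers only: three layers, each with a hole open 10% of the time.
three_layers = [0.1, 0.1, 0.1]
print(breach_probability(three_layers))

# Sealing the hole in one layer (probability 0) blocks this route entirely.
one_layer_sealed = [0.1, 0.0, 0.1]
print(breach_probability(one_layer_sealed))
```

With these made-up figures the holes line up roughly once in every thousand opportunities, which is one way of seeing why a weakness can sit unnoticed for years before an incident exposes it. If the holes share a common cause, the independence assumption breaks down and the true figure can be much higher.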

Safe by Design

To go back to the example that I described in my last blog. We (Dave and I) made a mistake. Yes. But there were also some interesting design characteristics in the consumer unit that facilitated our mistake. How come it was so easy to make the mistake? How come when you take the cover off the unit the switch appears to be in exactly the opposite condition to the one that it is actually in? How come the switch is OFF when it is up and ON when it is down? So anything dropped onto the switch will switch the unit ON (to a potentially dangerous condition) rather than OFF (to a safe one)?

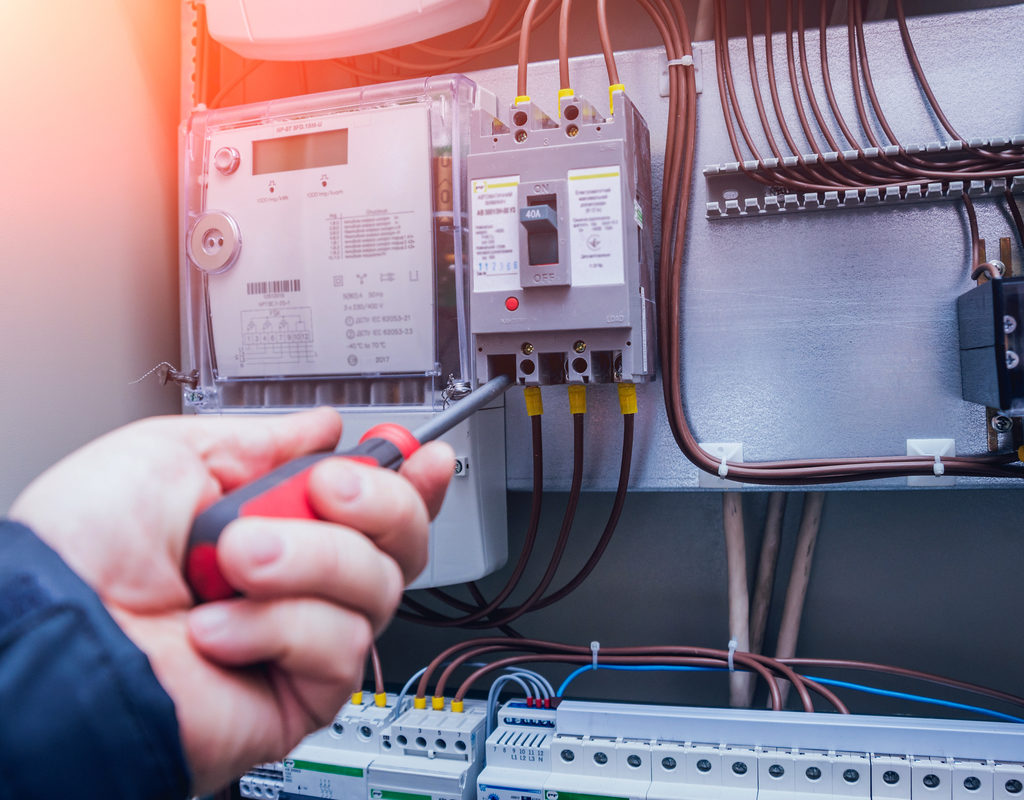

If you look at modern consumer units (see the photo at the top of this blog) they neither look nor work like this. That is partly because the technology has moved on – there will be miniature circuit breakers rather than fuses and a residual current device or two as well. But it is also because the design has improved. The isolator switch is not partly disassembled by opening the unit cover. And all of the switches are ON in the up position and OFF in the down position. A modern unit has been designed to be safer.

My point is that one of the defensive layers used to have holes in it but those particular holes have now disappeared. They have been sealed up.

A Recurrent Theme

If you look at the background to the Kegworth air crash you will see that human error, or pilot error, was the active failure that caused the plane to crash. But sitting behind that active failure were various latent conditions that had nothing to do with the actions of the pilot or co-pilot on the day. These included inadequate pilot training on new types of aircraft, inadequate safety testing of new aircraft engines under all flight conditions, poor cockpit display design and potentially significant conflicts of interest within regulatory bodies. All of these are explored in the documentary referenced in my last blog (or see here).

Piper Alpha has similar characteristics. A simple error committed by workers was the active failure. Behind it sat latent failures built into the management system on the platform: a dysfunctional permit-to-work (PTW) system in operation, poor design specification for the platform during modification, and questions about conflicts of interest within, and the competence of, the regulator.

And the Herald of Free Enterprise disaster. And the Challenger disaster. And Deepwater Horizon, etc. …

The strength of Reason’s idea is that it can be flexibly applied to many major disasters and incidents and does not focus solely on causative factors that sit within a single organisation. It can be used to look at the entire control envelope.

History Repeats

Two years on from the Grenfell Tower fire, as the public inquiry moves from Phase 1 to Phase 2 (see the inquiry website here), and with the publication of Building a Safer Future, Dame Judith Hackitt’s independent review of building regulations and fire safety (see here for the report and here for IOSH magazine’s recent article), it is clear that latent conditions sat within the whole regulatory framework and that a faulty fridge-freezer was the least of it (see here for BBC report).

“Those who fail to learn the lesson of history are doomed to repeat it”

The challenge for the future is not to worry about the fire safety regulatory envelope, since that is already under intense scrutiny (and rightly so). Instead, the challenge is to spot different arenas where the regulatory envelope is equally “not fit for purpose” (to directly quote Dame Judith) before the next disaster happens.

To apply Reason’s logic pre-event rather than post-event.

–

Dr Jim Phelpstead BSc, PhD, CMIOSH

RRC Consultant Tutor